For the past 20 years, I have been exploring how people change their behavior. This exploration has led me down many different paths and lines of inquiry. One of the most fascinating areas of research that I’ve investigated surrounds the now hot topic of behavioral economics.

I often describe behavioral economics as the “fusion of psychology and economics in order to gain a better understanding of human behavior and decision making.”

So what do we find out when we fuse psychology and economics together?

“Humans often act in very irrational ways.”

Now that is not groundbreaking news for most of us. Even when I graduated with an economics degree, I knew that people didn’t always act in rational ways, or at least I didn’t. Otherwise, why would I stay up watching bad T.V. until 2:30 AM when I knew I had to get up by 7:00 AM for a meeting? Why would I spend a hundred dollars on a dinner out but fret over buying a steak that cost more than $10 at the grocery store?

However, for many economists, that statement was heresy. Many economic models are based on the assumption that people act in rational ways to maximize their own utility (i.e., happiness). These theories held that we might make irrational choices in the short term, or when we don’t have enough information, or at least that your irrational behavior would be so different from mine that, on average, we would be rational.

The truth discovered by behavioral economics is that this is often not the case. We don’t act rationally – in fact, we sometimes act in exactly the opposite way from how an economist would think we should act.

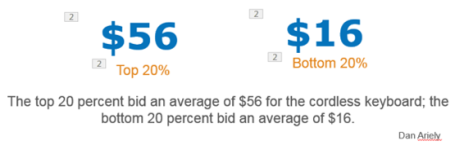

For example, research has shown that we will judge the value of an unfamiliar item using totally irrelevant data. Dan Ariely ran a wonderful study in which he asked people to bid on a wireless keyboard (something that they were not very familiar with at the time), but before they bid, they had to write down the last two digits of their Social Security number (a totally irrelevant piece of data). The results of the bidding were fascinating (the top 20% being those whose SSNs ended in 80 or above, the bottom 20% being those whose SSNs ended in 20 or below):

This is a significant difference in how much they bid – entirely based on the last two digits of the SSN.

Here’s another one.

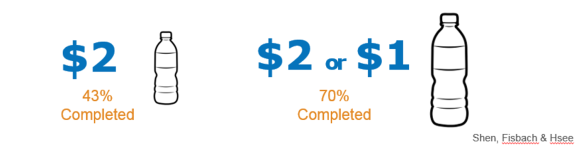

Would you work harder for a set amount (say $10) or for an uncertain amount (say a 50% chance of $10 and a 50% chance of $5)? Most rational people would say that they would work harder for the guaranteed payout of $10. That isn’t the case.

In a study where participants had to drink a large amount of water in two minutes, one group was offered a fixed $2 for finishing it, while the other group was told they would earn either $1 or $2 (determined at random). So what was the result?

A 43% completion rate for the certain reward versus a 70% completion rate for the variable one. Not what you would think, right?

Note that this doesn’t apply to people choosing whether to participate. Existing research suggests that we prefer certainty over uncertainty when deciding whether to opt in to a goal. However, uncertainty is more powerful in boosting motivation en route to a goal.
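To see why the water-drinking result runs against a purely rational model, it helps to compare the expected payout of the two offers. The sketch below is only a back-of-the-envelope check: it assumes the $1 and $2 outcomes were equally likely (the study description above only says the amount was random), and `expected_payout` is a helper name made up for this illustration.

```python
from fractions import Fraction


def expected_payout(outcomes):
    """Return the expected value of a list of (probability, amount) pairs."""
    total_probability = sum(p for p, _ in outcomes)
    if total_probability != 1:
        raise ValueError("probabilities must sum to 1")
    return sum(p * amount for p, amount in outcomes)


# Certain reward: $2 for finishing the water.
certain = expected_payout([(Fraction(1), 2)])

# Uncertain reward: $1 or $2, ASSUMED here to be a 50/50 split.
uncertain = expected_payout([(Fraction(1, 2), 1), (Fraction(1, 2), 2)])

print(float(certain), float(uncertain))  # 2.0 1.5
```

On expected value alone, the fixed $2 is the better deal, yet the variable reward is the one that produced the higher completion rate.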

So what does any of this have to do with change?

We so often want to drive change in ourselves or our organizations, and we think through the process in a rational and systematic manner. I’ve worked with companies that are baffled when they don’t see a long-term increase in employee productivity and satisfaction after they raise wages (Hedonic Treadmill Effect). I know people who have mapped out their exercise routine for the next day, only to hit the snooze button instead of getting up and going for their morning run (Hyperbolic Discounting).

Too often we try to implement a change program based on a belief that we are rational beings.

Behavioral economics highlights that this just isn’t the case.

We need to understand and account for the biases that influence our change efforts (or those of our organization). We need to ensure that we are prepared to overcome these unexpected obstacles when they show up.

Take, for instance, how we respond depending on whether an action is framed as a positive or a negative. One study looked at early registration for PhD students: would it be better to offer a discount for early registration or impose a penalty fee for late registration? The time frame and the amount of money at stake are the same, but the response is significantly different:

We need to take into account how we talk about our change (Framing Effect), how we structure the environment for change (Choice Architecture), how we look to validate our behaviors by looking to others (Social Proof or Bandwagon Effect), how we tend to underestimate the time it takes to accomplish a task (Planning Fallacy), how we are more motivated to avoid a loss than to achieve a similar gain (Loss Aversion), and a host of other non-rational but common human biases.

We know that change is hard. It is even harder when we design our own change, or our organization’s, based on a fundamentally wrong idea of ourselves.

To make our change initiatives more likely to succeed, we need to have a better understanding of our true selves. Behavioral economics offers us a mirror in which we can see that true self and, as a result, develop more effective change strategies.

Behavioral economics is not a silver bullet – but it does help – and we need all the help we can get.
